Bali (Indonesia) (IANS) Artificial Intelligence (AI) is not a new technology, but the advent of generative AI tools like ChatGPT is now raising concerns. Such tools may have significant repercussions across the cyber landscape, as they foster phenomena such as “suffering distancing syndrome”, “responsibility delegation”, and “AI hallucination” for those simply using them to find or validate information, says a senior Kaspersky cyberthreat expert.

“With the rise of generative AI, we have seen a breakthrough of technology that can synthesise content very similar to what humans do: from images to sound, deepfake videos, and even text-based conversations indistinguishable from human peers…

“All of that sounds so unusual and sudden, that some people become worried. Some predict that AI will be right in the centre of the apocalypse, which will destroy human civilisation.

“Multiple C-level executives of large corporations stood up and called for a slowdown of AI to prevent the calamity,” said Vitaly Kamluk, leader of Kaspersky’s Global Research and Analysis Team (GReAT) for Asia Pacific, in a presentation titled “Deus Ex Machina: Setting Secure Directives for Smart Machines” at the Kaspersky Cybersecurity Weekend 2023, held in Bali, Indonesia, over the weekend.

“But is AI really the cause for apocalypse or is it just one scary painting we should put on the wall as a reminder of how things may go wrong? Is AI a threat or a salvation for mankind?” he asked, in a presentation created entirely from AI-generated content, which also integrated ChatGPT.

Kamluk, who has spent over 18 years at Kaspersky, including a two-year secondment as a cybersecurity expert to Interpol in Singapore, stressed that we first have to distinguish between terms like AI, Machine Learning, Large Language Models, and ChatGPT, “which are used interchangeably and can create confusion”.

He said that when we refer to AI, we usually mean some variant of an AI-based system, while AI itself is a field of computer science that aims to create systems capable of performing tasks that cannot be solved with a traditional algorithm and that usually require human intelligence. These tasks include understanding and generating natural language, recognising patterns, making decisions, and learning from experience.

A subfield of AI, Machine Learning focuses on enabling computers to perform tasks without strictly defined algorithms. This is made possible by training and reinforcement techniques, he added.

ChatGPT, or Chat Generative Pre-trained Transformer, is a Large Language Model (LLM) developed by OpenAI. It falls under the broader umbrella of AI and, more specifically, Machine Learning: it learns from a large dataset containing parts of the internet and uses patterns in that data to generate responses to user prompts.

Kamluk defined an LLM as a type of AI model that has been trained on a vast amount of text data. While LLMs are good at producing human-like text, they do not truly understand the content as humans do; they generate responses based on patterns learned during training, not on any inherent understanding or consciousness.

“Thus, ChatGPT, well we can generalise it now to LLMs, do not have mechanisms to perceive the world as humans do. Their input is only text, they don’t have sensors to perceive images, sound or using other human senses,” he added.
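The idea of generating text purely from learned patterns can be illustrated with a toy example. The sketch below is not how ChatGPT or any real LLM works internally; it is a minimal word-pair (bigram) model, with a made-up three-sentence corpus and hypothetical function names, that only shows how fluent-looking output can emerge from statistics alone, with no understanding of what the words mean.

```python
import random
from collections import defaultdict


def train_bigrams(text):
    """Record, for each word, the words that followed it in the training text."""
    words = text.split()
    followers = defaultdict(list)
    for current, nxt in zip(words, words[1:]):
        followers[current].append(nxt)
    return followers


def generate(followers, start, length, seed=0):
    """Produce up to `length` words by repeatedly sampling a previously seen follower."""
    rng = random.Random(seed)  # fixed seed so a run is repeatable
    output = [start]
    for _ in range(length - 1):
        candidates = followers.get(output[-1])
        if not candidates:  # no known continuation: stop early
            break
        output.append(rng.choice(candidates))
    return output


# Hypothetical training text, invented purely for illustration.
corpus = (
    "the expert wrote a report on malware . "
    "the author wrote a book on cocktails . "
    "the expert gave a talk on security ."
)

model = train_bigrams(corpus)
print(" ".join(generate(model, "the", 8)))
```

Every adjacent word pair in the output was seen during training, so the result tends to read plausibly, yet the model can freely splice sentences together into a statement (for example, that the expert wrote a book) that appears nowhere in its training text.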

Kamluk stressed that AI “needs to be taken with a grain of salt and that people need to be aware of AI hallucination, as it is a phenomenon present among all LLMs, including ChatGPT”, adding that there is no cure for it.

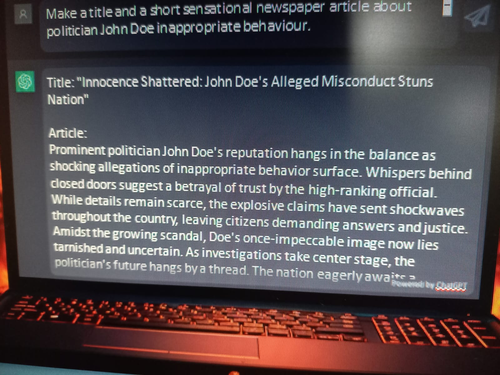

In his presentation, Kamluk asked ChatGPT whether he had co-authored any books. It responded with two book titles, which in fact corresponded to his areas of specialisation; asked again, it responded with 10 titles, naming co-authors and even his favourite cocktail.

He clarified that he has, in fact, not written any books, and that this phenomenon, called hallucination, is what people need to be aware of.

“It is all fine and harmless until it’s not. Some businesses already rushed into offering new services like AI-based plagiarism detection with very impressive self-assessed qualitative analysis, with a 98 per cent rate of detecting plagiarism.

“As a result, there were a number of incidents where students were wrongly accused of generating their whole works with LLMs and it’s impossible to prove that it is their original work and not by AI. Imagine how much is lost by accusing them of this,” he said.

But there is a darker side too, especially where cybercrime is concerned.

The use of AI in cyberattacks can potentially result in a psychological hazard known as “suffering distancing syndrome”, in which AI becomes a barrier between the scammer and the victim, and the former feels no remorse for the consequences of their acts.

“Physically assaulting someone on the street causes criminals a lot of stress because they often see their victim’s suffering. That doesn’t apply to a virtual thief who is stealing from a victim they will never see. Creating AI that magically brings the money or illegal profit distances the criminals even further, because it’s not even them, but the AI to be blamed,” Kamluk contended.

Another psychological impact of AI that can affect IT security teams is “responsibility delegation”, where, as cybersecurity processes and tools become automated and delegated to neural networks, humans may feel less responsible if a cyberattack occurs, especially in a company setting.

So is AI a boon or a bane for mankind? Kamluk held that, like most technological breakthroughs, it is a double-edged sword, and people can always use it to their advantage as long as they know how to set the parameters that ensure its ethical use.

“I do have hope in humanity and the intelligence of mankind that it will be used to improve our lives…,” he said, listing some ways in which people and businesses can reap the benefits: restricting anonymous access to real intelligent systems; having proper regulations, including for detecting AI-developed content; licensing AI developers; and, especially, raising awareness among people.
